Home

Djamé Seddah

I am a former tenured associate professor at Sorbonne University, now in a full-time senior research position at INRIA Paris in the Almanach team. My interests cover the field of natural language processing—in the past mainly wide-coverage multilingual syntactic analysis and the syntax-semantics interface, and now building robust language models for low-resource languages, specialized domains, etc. I invested a considerable amount of time in the construction of annotated corpora (Sequoia corpus, French Social Media Bank, French Question Bank, Narabizi Treebank, Counter Dataset, etc.) and parsing models for morphologically-rich languages. I participated in the development of the CamemBERT, PagnolXL, CamemBERTa, and ModernCamemBERT language models, as well as character-based models for dialectal and highly noisy languages (CharacterBERT-UGC).

My current research focuses on language models and possible ways of avoiding their weaponization (content detection, bias detection and mitigation, etc.). To this end, together with Benoit Sagot and Eric de la Clergerie, I am extremely involved in the development of the GAPerson series of LLMs that focus on French. You can see the relevant pages and models on Hugging Face's GAPeron collection page; our paper is on arXiv.

Research themes

- LLM safety, interpretability, and backdoor analysis

- Model specialization, instruction tuning, and alignment

- Social and cultural robustness in NLP

- Bias evaluation, fairness, and context-sensitive modeling

- Low-resource, multilingual, and domain-specific language modeling

Quick links

Short bio

Dr. Djamé Seddah is a former tenured associate professor at Sorbonne University, now on a full time senior research position INRIA Paris in the Almanach team. His interests cover the field of natural language processing, in the past mainly wide-coverage multilingual syntactic analysis, the syntax-semantics interface, and now trying to build robust language models, eg. for low-resource languages, specialized domains, etc. A specialist in the construction of annotated corpora (Sequoia corpus, French Social Media Bank, French Question Bank, Narabizi Treebank, Counter Dataset, etc.), he participated in the development of the CamemBERT, PagnolXL, CamemBERTa and ModernCamemBERT language models, as well as character-based models for dialectal and highly noisy languages (CharacterBERT-UGC)

His current research focuses on language models and possible ways of avoiding their weaponization (content detection, bias detection and mitigation, etc.). To this end, together with Benoit Sagot and Eric de la Clergerie, he’s extremely involved in the development of the GAPerson series of LLMs that focus on French. See the relevant pages and model at HuggingFace’s. The paper is on arXiv.

Selected news from my archived homepage

2026

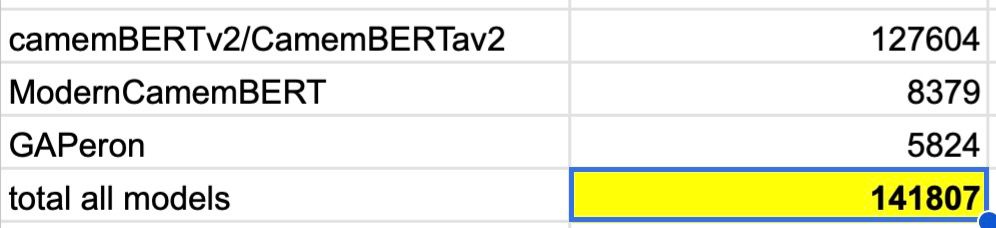

- Recently, I had to produce some metrics on the main models we produced and in which I was involved (since the beginning of Prairie-PSAI, in October 2024) and it’s kind of reassuring to see that our work is having an impact :)

Of course, these indicators come from HuggingFace but still…

More details here.

-

Cool series of papers currently on submission about mechanistic interpretability: (i) Triggers Hijack Language Circuits: A Mechanistic Analysis of Backdoor Behaviors in Large Language Models and (ii) Disentangling meaning from language in LLM-based machine translation. The GAPeron paper is still on submission too !

-

Our multimodal finance benchmark dataset has been accepted at the 7th Financial Narrative Processing Workshop (FNP 2026) ! Very cool and long work I have to say.

-

Welcome to Ana Mosolova who joined my group in January !

2025

- 2 years Postdoc grant obtained from Inria’s Direction des Relations Internationales to work with Inria Chile. Welcome Yanis Karmin!

- Arij Riabi defended her PhD thesis on March 18 !

2024

- HDR defended on September 18.

- BPI projects Scribe and Code Common accepted.

- Action Exploratoire SALM accepted.

- I’m now a PRAIRIE-PSAI chair (Prairie 2) !

2023

- We got an ACL 2023 paper accepted, it’s about a new SOTA LM for French ! Congrats Wissam !

- I got interviewed by France Info about ChatGPT and its impact on student production (Replay here) and by L’Express magazine about the ways to possibly detect language models-generated content (article here) !